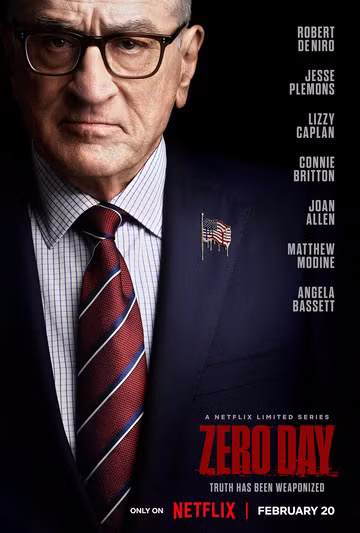

I started watching the Netflix miniseries Zero Day the other night. It’s a political thriller starring Robert De Niro, built around the investigation of a devastating cyberattack on the United States. I’ve only watched the first two episodes so far, and I’m a layperson when it comes to cybersecurity, but as I know computers, a friend asked what I thought about the premise and how accurate (or not) it was. Since I put together a rather lengthy reply, with a number of links to sources, I thought I’d share my response.

The jargon so far (e.g., references to PLCs and SCADA systems), as well as the expressed difficulty of simultaneously attacking so many diverse systems, strikes me as accurate. And it clearly draws a lot on events that have taken place. I suspect (and hope) that such a large, simultaneous, successful attack on such a diverse array of cyber-physical systems (causing them to affect things in the physical world, not just crash, install ransomware, or delete data) is quite improbable. That said, there have been large-scale successful attacks of a non-physical nature affecting millions of systems at a time, and entities do stockpile zero day vulnerabilities. So it’s conceivable that a bad actor could accumulate multiple ones, each for a different type of system, to launch simultaneously.

Zero day exploits take advantage of generally unknown security flaws in software, hardware, or firmware. There’s a huge market for zero day vulnerabilities. Good hackers discover them and report them, often for substantial bounties. But there is far MORE money to be made by selling them to private bad actors or to governments. And when the US government discovers a zero day, it sometimes tells the software’s authors, such as Microsoft, but it also sometimes keeps it secret to use later itself.

The part about the zero days originating with the NSA? That’s straight from reality. Back in 2016, Shadow Brokers released a bunch of NSA hacking tools, which were then picked up, modified, and used by North Korea, Russia, and China.

Because we have lots of diverse systems running the same core software, a single attack (or even a mistake) can cause widespread and diverse outages. Last year’s widespread outages affecting airlines, airports (but not air traffic control or airplanes), banks, hospitals, stores, and far more were caused by an error accidentally introduced into CrowdStrike’s Falcon cybersecurity software for Windows (rather ironic). It crashed 8.5 MILLION systems, which were temporarily unable to restart. Similarly, NotPetya spread far wider than its developers intended, infecting machines across the world with ransomware.

Back in 1994, the US government passed the Communications Assistance for Law Enforcement Act (CALEA), requiring that telephone companies design their systems to be easier to wiretap, and this was later expanded to include internet service providers. At the time, the government was told that this was a VERY bad idea. It made work easier for law enforcement, but as was pointed out, these mandatory backdoors could and eventually would be exploited by bad actors. And because the US is such a huge market, the same vulnerable systems were sold worldwide. Twenty years ago, Vodafone Greece was hacked this way. Now it turns out that the Chinese are using these vulnerabilities to steal data across the US (but not cause physical damage).

There’s a passing reference in the show to SCADA systems and PLCs being attacked. SCADA stands for supervisory control and data acquisition. These systems are used to supervise and control sensors and control systems for infrastructure such as pipelines, the electric grid, and water networks. At a lower level are PLCs (programmable logic controllers). These control the lower-level operations of manufacturing and other equipment (again, such as the electric grid). Stuxnet, which damaged Iranian uranium enrichment equipment, infected the PLCs controlling the centrifuges. This would be the type of target most likely to directly damage or destroy physical systems. And our systems are vulnerable, with Chinese hacking attempts on American pipelines and other systems.

FAR too many unprotected or poorly protected systems are connected to the internet. There’s even a search engine, Shodan, dedicated to searching for Internet of Things devices and control systems.