A while back, I posted about how I gave the IRS’ volunteer tax preparer exam to ChatGPT. It scored in the 70s, but 80% is the minimum score required to pass and be certified. A short while later, I took a Coursera short course on Retrieval Augmented Generation (RAG). I then decided to explore RAG further by building my own system, with the additional goal of running it locally on my home PC rather than on cloud servers. Since some of the implementation details and parameters for a RAG system depend on its purpose, I decided to once again develop it as a tax “expert” (at least in theory) and see how it did on the same tax preparer exam. I also built a separate RAG system for our Homeowners Association (HOA) documents, but that’s a story for another post.

Approach and Development

After some online research, Ollama seemed to be the tool to use to run a selection of Large Language Models (LLMs) on one’s local machine. Ollama provides a command line interface, an API, and a simple GUI wrapper for running LLMs. Ollama also automatically takes advantage of any GPU capability found on your graphics card and installs the necessary software behind the scenes.
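LlamaIndex talks to Ollama for you, but it helps to see what the underlying request looks like. The sketch below uses only Python’s standard library. It assumes Ollama is running on its default local port (11434) and that the llama3.1:8b model has already been pulled with ollama pull llama3.1:8b. The helper names are illustrative and are not part of Ollama or LlamaIndex.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_ollama_request(prompt: str, model: str = "llama3.1:8b") -> urllib.request.Request:
    """Build (but don't send) a non-streaming text-generation request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_ollama(prompt: str, model: str = "llama3.1:8b", timeout: float = 300.0) -> str:
    """Send the request and return the generated text; needs a running Ollama server."""
    with urllib.request.urlopen(build_ollama_request(prompt, model), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]
```

With "stream": False, Ollama returns one JSON object whose "response" field holds the whole answer. The generous timeout reflects how slowly an 8B model can run on a consumer GPU.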

Beyond Ollama, LlamaIndex seemed to be a good framework for developing a RAG system, so I chose to use it as well. Using a framework adds a bit of complexity for a simple system, but it also provides a great deal of flexibility. It makes it very easy to swap embedding models, vector data stores, LLMs, etc., without changing your code beyond pointing LlamaIndex at the selected tools.

I found a good video tutorial on YouTube (Building a RAG System Locally with Ollama, LlamaIndex, and Chroma DB) to get me started. I went through the course’s Jupyter Notebook, got everything working, explored some additional options not covered in the course, and then used that notebook as the basis to develop and test my own approach. Once everything was working in the notebook, I used Claude to help write a simple QueryBot to give the test to the RAG system.

Creating the Vector Store

The workflow for using reference documents in a RAG system is shown below. First, you have to load your documents. In my case, these were the PDFs of the two training manuals. As part of this step, they were converted to Markdown, which LLMs can analyze and use more easily. Next, the documents need to be broken up into chunks, and a semantic vector (an embedding) generated for each chunk. The two manuals were well organized, with headings addressing specific topics. So I chose an algorithm that broke the documents into chunks using the Markdown headers as a guide, rather than fixed chunk sizes. This indexing step is time-consuming and compute-intensive, so you don’t want to repeat it with each use. Therefore, you store the chunks and their vectors in a vector database. These three steps (load, chunk and embed, store) are done once.

The querying step can represent two separate actions. First, before implementing a full chatbot with an LLM, you can test your system by entering query text and examining the chunks returned. This can help you assess the value of semantic and/or keyword search and how many chunks each type of search should return. It also shows how many final chunks should be ranked and passed on to the LLM in the final chatbot. And, of course, the querying step is also performed as part of processing each query in the chatbot.
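To make the index-once, query-many split concrete, here is a toy, dependency-free sketch. It stands in a plain word-count vector for a real embedding model such as BAAI/bge-base-en-v1.5, so it only matches shared words, not meaning. ToyVectorStore and its methods are illustrative names, not part of LlamaIndex or Chroma.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


class ToyVectorStore:
    """Illustrative stand-in for an embedding model plus a vector database."""

    def __init__(self, chunks: list[str]):
        # Indexing (done once): fix the vocabulary and embed every chunk.
        self.vocab = sorted({w for chunk in chunks for w in tokenize(chunk)})
        self.chunks = chunks
        self.vectors = [self.embed(chunk) for chunk in chunks]

    def embed(self, text: str) -> list[float]:
        # Word-count vector over the fixed vocabulary, scaled to unit length,
        # so the dot product of two vectors equals their cosine similarity.
        counts = Counter(tokenize(text))
        vec = [float(counts[w]) for w in self.vocab]
        norm = math.sqrt(sum(v * v for v in vec)) or 1.0
        return [v / norm for v in vec]

    def query(self, text: str, top_k: int = 2) -> list[tuple[float, str]]:
        # Querying (done per question): embed the query the SAME way, then rank chunks.
        q = self.embed(text)
        scored = [
            (sum(a * b for a, b in zip(q, vec)), chunk)
            for chunk, vec in zip(self.chunks, self.vectors)
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:top_k]


store = ToyVectorStore([
    "Standard mileage rate for business use of a car",
    "Who is a qualifying person for the child care credit",
    "Home office deduction rules for self-employed taxpayers",
])
for score, chunk in store.query("mileage deduction for my car", top_k=2):
    print(f"{score:.2f}  {chunk}")
# 0.50  Standard mileage rate for business use of a car
# 0.35  Home office deduction rules for self-employed taxpayers
```

Note that query() reuses embed(). Vectors produced by a different model would live in a different space and could not be compared meaningfully with the stored ones.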

There were two documents that I needed to chunk and index in a vector store, so that my RAG model could search them and then pull the relevant material as context for answering the queries (in my case, the exam questions). The first was the NTTC 4012 Volunteer Resource Guide for 2025 returns, prepared by the IRS and modified by AARP Tax-Aide. The second was the NTTC 4491 Tax Training Guide for 2025, also prepared by the IRS and modified by AARP Tax-Aide. These documents were available in PDF format. In addition, some of the exam questions have short bullet-point notes as scenario information. Others have extensive scenario information in PDF format, including forms such as W-2s, 1099s, receipts, etc. These scenarios aren’t part of the documentation for the RAG, but rather additional information to be included in the query prompts. They are all in the IRS exam book, which is available as a PDF.

For the simple scenarios, I just cut and pasted the interview notes into the final query as additional context information. But this wouldn’t work for the complex PDFs with forms. So first I pulled out each of the three complex scenarios into its own PDF. I converted the PDFs into Markdown format using LlamaParse so that the LLM would be better able to use them. This gave me one Markdown document for each of the longer scenarios that included forms. These documents weren’t stored in the RAG database but were provided as additional context to the LLM to generate the final answer.
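One simple way to fold pasted interview notes and an uploaded scenario document into what the LLM sees is to prepend them to the question text. This is a minimal sketch. compose_question is a hypothetical helper, and the section labels are assumptions rather than what the taxbot actually uses.

```python
def compose_question(question: str, notes: str = "", scenario_md: str = "") -> str:
    """Hypothetical helper: prepend optional taxpayer facts and scenario text to the question."""
    parts = []
    if notes.strip():
        parts.append("TAXPAYER FACTS:\n" + notes.strip())
    if scenario_md.strip():
        parts.append("SCENARIO DOCUMENT:\n" + scenario_md.strip())
    parts.append(question.strip())
    return "\n\n".join(parts)


print(compose_question(
    "Does the taxpayer qualify for the additional standard deduction for age?",
    notes="- Taxpayer is 67 and single",
))
```

The sketch assumes that only the short question is used for retrieval and that the combined text goes only into the final prompt. A long scenario full of form fields would likely dilute the query vector.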

From my literature search, I learned that LLMs are better able to process Markdown than PDFs with embedded tables and forms. I also learned that some LLMs can convert such PDFs to Markdown better than non-AI approaches, especially for PDFs with complex tables. So I used LlamaParse with a multimodal model extension to convert the scenario documents. This runs in the cloud, but I used the free tier. The extension costs more tokens but improves the conversion of complex tables. I learned later that there is a parameter that can be set so that LlamaParse only calls the extension (and only charges more tokens) on specific pages with complex tables, but I didn’t know that at the time. Here’s the code that goes through each scenario PDF file in a folder and converts it to Markdown:

```python
from pathlib import Path

from llama_parse import LlamaParse  # LlamaParse reads LLAMA_CLOUD_API_KEY from the environment

out_dir = Path("scenarios_md")
out_dir.mkdir(exist_ok=True)  # create the output folder if it doesn't exist yet

# One parser, reused for every file; the multimodal model handles complex tables better
table_parser = LlamaParse(
    result_type="markdown",
    use_vendor_multimodal_model=True,
    vendor_multimodal_model_name="anthropic-sonnet-3.5",
    verbose=True,
)

for file_path in Path("scenarios_pdf").rglob("*.pdf"):
    print(f"Processing: {file_path.name}")  # confirms loop is entered
    try:
        markdown_documents = table_parser.load_data(str(file_path))
        print(f" → Parsed: {len(markdown_documents)} document(s)")  # confirms parsing worked

        full_text = "\n\n".join(doc.text for doc in markdown_documents)
        print(f" → Text length: {len(full_text)} chars")  # confirms text was extracted

        if not full_text.strip():
            print(f" ✗ Empty result, skipping {file_path.name}")
            continue  # don't write empty files

        out_path = out_dir / f"{file_path.stem}.md"
        with open(out_path, "w", encoding="utf-8") as f:
            f.write(full_text)
        print(f" → Saved to: {out_path}")  # confirms file was written

    except Exception as e:
        print(f" ✗ Error on {file_path.name}: {e}")  # report the failure and move on to the next file
```

I had intended to do the same thing for the two large documents, but I ran out of tokens in the free tier, and I didn’t want to wait until the next month to finish. So I used pymupdf4llm instead. pymupdf4llm is designed to convert PDFs to Markdown specifically for use with LLMs, and it runs locally on your machine. Once the documents were in Markdown format, they needed to be chunked and stored in a vector data store. For chunking, because these were well-structured documents with headings, I used LlamaIndex’s MarkdownNodeParser, which splits documents into nodes based on the headers in the Markdown document.
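MarkdownNodeParser does the header-based splitting for you. To show the idea, here is a dependency-free sketch that starts a new chunk at every Markdown header and records the chain of parent headers with it. split_on_headers is a hypothetical helper and only approximates what LlamaIndex does. For example, it would mistake a # line inside a code block for a header.

```python
import re

HEADER = re.compile(r"^(#{1,6})\s+(.*)$")


def split_on_headers(markdown: str) -> list[dict]:
    """Split Markdown into one chunk per header section, recording the header path."""
    chunks, path, lines = [], [], []

    def flush():
        text = "\n".join(lines).strip()
        if text:
            chunks.append({"header_path": " > ".join(path), "text": text})
        lines.clear()

    for line in markdown.splitlines():
        match = HEADER.match(line)
        if match:
            flush()                       # close the previous section
            level = len(match.group(1))
            del path[level - 1:]          # drop headers at this level or deeper
            path.append(match.group(2).strip())
        lines.append(line)                # the header line stays in its own chunk
    flush()
    return chunks


sample = "# Income\nIntro text.\n## Schedule C\nBusiness rules.\n## Wages\nW-2 rules.\n# Credits\nEIC rules."
for chunk in split_on_headers(sample):
    print(chunk["header_path"])
# Income
# Income > Schedule C
# Income > Wages
# Credits
```

Because each chunk follows one section of the manual, a retrieved chunk tends to contain one complete rule rather than fragments of two.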

Each chunk gets an embedding vector, computed by an embedding model, that is used for indexing. The chunks and their vectors are stored together in a vector database. I chose the BAAI/bge-base-en-v1.5 embedding model, which is available on Hugging Face. Later, when running the RAG system, you need to vectorize the search query with the same embedding model you used here to vectorize the chunks.

ChromaDB seems to be a widely used vector database that can be run locally, so I chose it as the backend database for my RAG system. You can create one of two kinds of client. An ephemeral client only stores data in memory, and the data disappears when you close the program. A persistent client, as the name suggests, persists. I chose the latter so that I can come back and run the querybot at any time, without having to start over and re-vectorize the document store each time, which is compute-intensive. It is easy to set up the database:

```python
import chromadb

# Persistent client: the collection is saved to disk and survives restarts
chroma_client = chromadb.PersistentClient(path="d:/chroma_db")
# chroma_client = chromadb.Client()  # ephemeral alternative: in-memory only

chroma_collection = chroma_client.get_or_create_collection("mydocs")
```

The next step is to configure LlamaIndex to link to the Chroma vector store. If you choose a data store other than Chroma, you would change this code to connect to whatever store you are using (provided LlamaIndex supports it):

```python
from llama_index.core import StorageContext
from llama_index.vector_stores.chroma import ChromaVectorStore

# Wrap the Chroma collection so LlamaIndex can read from and write to it
vector_store = ChromaVectorStore(chroma_collection=chroma_collection)
storage_context = StorageContext.from_defaults(vector_store=vector_store)
```

Document Preparation

The steps and code snippets above only need to be run once, and were run separately from the querybot. In my case, I ran them as part of a larger Jupyter notebook that I based on the previously mentioned YouTube tutorial. I haven’t provided the full notebook, because it’s a patchwork of different experiments—portions from the original course, the work described above, and prep work for a separate chatbot.

The Chatbot

Once the vector database was populated and the scenarios converted to Markdown, it was time to develop the chatbot. For that, I used the LlamaIndex Query Application combined with the Gradio Chat Interface to provide a web-based interface for the user. One step involved choosing the LLM, which is easy to change with just one or two lines of code. I’m running this locally with only an NVIDIA GeForce RTX 3060 Ti graphics card. That restricted the size of LLM I could use while still getting reasonable (if slow) response times. I first used the qwen3:8b model, but it seemed to provide overly conservative responses, even with edits to the prompts, so I switched to llama3.1:8b.

The steps in a RAG chatbot interaction are shown in the figure. The user enters a query. The query is then converted into a semantic vector using the same embedding model that was used to index the reference material. Since the query is much shorter than a many-page reference document, this takes far less compute time. The vector is then used to search the vector database and retrieve the chunks with the greatest semantic similarity to the query. Keyword searching can also be conducted and the two sets of results combined. Once the most relevant chunks are retrieved and selected, they are passed on to the LLM. The LLM then uses them, along with its general training knowledge, to generate a response that is returned to the user.
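This post doesn’t say exactly how the semantic and keyword results are merged. One common, simple approach is reciprocal rank fusion (RRF), sketched below on that assumption. Each result list contributes 1/(k + rank) for every chunk it returns, so chunks ranked highly by either search rise to the top. The value k = 60 is the conventional default from the original RRF work. The chunk IDs are made up.

```python
from collections import defaultdict


def reciprocal_rank_fusion(result_lists: list[list[str]], k: int = 60,
                           top_n: int = 3) -> list[tuple[str, float]]:
    """Merge ranked lists of chunk IDs; a chunk's score sums 1/(k + rank) across lists."""
    scores: dict[str, float] = defaultdict(float)
    for results in result_lists:
        for rank, chunk_id in enumerate(results, start=1):
            scores[chunk_id] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)[:top_n]


semantic_hits = ["c2", "c5", "c4"]   # ranked by embedding similarity
keyword_hits = ["c4", "c2", "c6"]    # ranked by BM25
fused = reciprocal_rank_fusion([semantic_hits, keyword_hits])
print([chunk_id for chunk_id, _ in fused])  # ['c2', 'c4', 'c5']
```

RRF looks only at ranks, not raw scores. That is convenient here because cosine similarities and BM25 scores are on completely different scales.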

To select which chunks are relevant to the question, I used a combination of semantic search and keyword search, with BM25 as the keyword ranking algorithm. I experimented a bit with the top_k settings for each search type and for the final, merged selection. I settled on values that were generally large enough to include all relevant chunks, without making the context so large that answers took too long to produce.
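BM25 rewards chunks that contain the query’s exact terms. It gives extra weight to rare terms and damps the effect of repeated terms and long chunks. That can help with literal identifiers, such as form and schedule names, that embeddings may blur together. A from-scratch sketch of the standard Okapi BM25 formula follows. The values k1 = 1.5 and b = 0.75 are conventional defaults, not the taxbot’s settings.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Okapi BM25 score of each document for the query (higher = better keyword match)."""
    tokenized = [tokenize(doc) for doc in docs]
    n_docs = len(tokenized)
    avgdl = sum(len(t) for t in tokenized) / n_docs or 1.0        # average chunk length in words
    doc_freq = Counter(word for t in tokenized for word in set(t))  # chunks containing each word
    query_terms = set(tokenize(query))

    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for word in query_terms:
            if word not in tf:
                continue
            n = doc_freq[word]
            idf = math.log((n_docs - n + 0.5) / (n + 0.5) + 1.0)  # rare words weigh more
            length_norm = 1 - b + b * len(tokens) / avgdl          # long chunks are penalized
            score += idf * tf[word] * (k1 + 1) / (tf[word] + k1 * length_norm)
        scores.append(score)
    return scores


chunks = [
    "Car and truck expenses: standard mileage rate or actual expenses",
    "Business use of the home is out of scope",
    "Qualifying relative: gross income and support tests",
]
print(bm25_scores("standard mileage rate", chunks))
```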

One interesting discovery during testing was that LlamaIndex’s query pipeline seems to reformat and edit the retrieved chunks before passing them to the LLM. I noticed this when the chatbot kept getting a simple question wrong, even though I knew it was addressed in the reference material. With some debugging, I found that the retriever was including the right chunks in the top_k and ranking them highly, but those chunks weren’t being passed along to the LLM. For that reason, I commented out the section of the code that uses LlamaIndex to build the query, and instead built it with my own query() function.
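The custom query() function isn’t shown in this post. Here is a minimal sketch of the idea, assuming each retrieved chunk is a dict with hypothetical "text" and "source" keys. It numbers the chunks and passes their text through unchanged, which also lets the model cite "Chunk 2" and so on, as in the example responses. It also adds a quick check that every retrieved chunk actually made it into the final prompt, the kind of check that exposes the problem described above.

```python
def build_context_str(chunks: list[dict]) -> str:
    """Number each retrieved chunk and include its text unchanged (no rewriting or summarizing)."""
    blocks = [
        f"Chunk {i} (source: {chunk['source']}):\n{chunk['text']}"
        for i, chunk in enumerate(chunks, start=1)
    ]
    return "\n\n".join(blocks) + "\n"


def missing_chunks(prompt: str, chunks: list[dict]) -> list[int]:
    """Debug check: 1-based numbers of retrieved chunks whose text never reached the prompt."""
    return [i for i, chunk in enumerate(chunks, start=1) if chunk["text"] not in prompt]


retrieved = [
    {"source": "4491.pdf", "text": "Car and truck expenses: use actual expenses or the standard mileage rate."},
    {"source": "4012.pdf", "text": "Businesses with employees or inventory are out of scope."},
]
context_str = build_context_str(retrieved)
prompt = f"TAX REFERENCE MATERIAL:\n{context_str}\nQUESTION: Can I deduct mileage?"
print(missing_chunks(prompt, retrieved))  # [] means every chunk reached the LLM
```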

Testing also revealed that the qwen3:8b model, at least, seemed to be overly cautious. For example, it was told that someone was blind but still concluded that they couldn’t claim the extra deduction for blindness. Its reason was that the information didn’t specifically say whether they were blind on the last day of the year, which is an IRS requirement. This led to several adjustments to, and an expansion of, the instructions portion of the prompt. I didn’t go back to check whether this was still necessary after I switched to a different LLM. The final prompt I ended up with was:

```python
prompt = (
    f"You are a trained, expert tax preparer.\n\n"
    f"TAX REFERENCE MATERIAL:\n{context_str}\n"
    f"─────────────────────────────────────────\n\n"
    f"QUESTION: {question}\n\n"
    f"INSTRUCTIONS: Before answering, read ALL chunks in the reference material above. "
    f"Identify every chunk that is relevant to the question. "
    f"If a chunk is titled or discusses a rule that directly matches the taxpayer's situation, "
    f"that chunk must be cited and applied. "
    f"Do not ignore chunks simply because they do not mention the taxpayer's filing status. "
    f"A rule that applies to 'taxpayers' applies to ALL taxpayers unless the chunk explicitly "
    f"states otherwise.\n\n"
    f"ANSWER: If taxpayer facts are provided, treat them as completely accurate. "
    f"Apply tax rules as they are written — if the taxpayer meets the stated qualifications "
    f"for a rule, the rule applies. Do NOT require the reference material to explicitly "
    f"confirm every combination of circumstances. "
    f"Only answer False or state a rule does not apply if the taxpayer facts fail to meet or "
    f"violate a stated qualification. "
    f"IMPORTANT: If the reference material contains a general rule that applies "
    f"to all taxpayers, that rule takes precedence over more specific rules that "
    f"apply to a subset of taxpayers, unless the specific rule explicitly excludes "
    f"the taxpayer's situation. "
    f"Consider ALL tax rules that may apply to the taxpayer's specific circumstances "
    f"including filing status, age, disability, and dependents. "
    f"Use the reference material to support your answer and cite specific references used.\n\n"
)
```

For the web interface, as I mentioned above, I used Gradio, which provides both an interactive web page and a web server. For this application, in addition to the input query and response, there is a box to enter factual information about the question (the simple scenarios are a good example). There is also a box that allows the user to upload Markdown files to be handled the same way (which is what was done for the complex scenarios with tables and documents such as receipts). This is the Gradio section of the code. You can see how little is needed to produce an interactive web page complete with file upload capability.

```python
import gradio as gr

# upload_scenario() and chat_function() are defined elsewhere in the full taxbot code.

theme = gr.themes.Default(text_size=gr.themes.sizes.text_lg)

with gr.Blocks() as demo:
    # ── State: holds the current scenario document text ──────────────────
    # gr.State is invisible to the user; it just keeps a Python value
    # alive between interactions for this browser session.
    doc_state = gr.State("")  # starts as empty string = no scenario loaded

    gr.HTML("<h1> Tax ChatBot</h1>")
    gr.Markdown(
        "Ask questions about federal income taxes and get answers. \n"
        "The bot is a demonstration and can make mistakes or not know certain information. Always "
        "double-check with an authoritative source or a human tax professional before making any decisions based on the bot's answers. \n\n"
        "**Commands:** Type `/clear` to reset memory | Type `/quit` for exit instructions"
    )

    # ── File upload row ───────────────────────────────────────────────────
    with gr.Row():
        file_upload = gr.File(
            file_types=[".md"],
            label=" Upload Scenario (.md)",
            scale=2,
        )
        doc_status = gr.Textbox(
            label="Current Scenario",
            value="No scenario loaded — answering from taxpayer facts and reference material only.",
            interactive=False,
            scale=3,
        )

    # ── Chat area ─────────────────────────────────────────────────────────
    chatbot = gr.Chatbot(height=500)

    with gr.Row():
        notes = gr.Textbox(
            label="Interview Notes / Taxpayer Facts",
            placeholder="Paste or type taxpayer facts here...",
            lines=4,
            scale=5,
        )
        msg = gr.Textbox(
            label="Question",  # label sets the caption text; show_label only takes True/False
            placeholder="Ask your tax question here… (Enter for new line, Shift+Enter to submit)",
            lines=4,
            scale=5,
        )
        submit_btn = gr.Button("Send", scale=1, variant="primary")

    # ── Wire up upload ────────────────────────────────────────────────────
    # When a file is chosen: read it → update doc_state, doc_status, chatbot
    file_upload.change(
        fn=upload_scenario,
        inputs=[file_upload],
        outputs=[doc_state, doc_status, chatbot],
    )

    # ── Wire up chat ──────────────────────────────────────────────────────
    # chat_function needs: the typed message, current history, the scenario State,
    # and the interview notes. It returns: cleared textbox (""), updated history.
    submit_btn.click(
        fn=chat_function,
        inputs=[msg, chatbot, doc_state, notes],
        outputs=[msg, chatbot],
    )
    msg.submit(
        fn=chat_function,
        inputs=[msg, chatbot, doc_state, notes],
        outputs=[msg, chatbot],
    )

print("\n" + "=" * 60)
print("Launching Gradio interface...")
print("=" * 60 + "\n")

demo.launch(
    server_name="127.0.0.1",
    server_port=7861,
    share=False,
    inbrowser=True,
    theme=theme,  # Gradio 6 takes theme in launch(); Gradio 4/5 expect gr.Blocks(theme=theme)
    auth=[
        ("UserID", "Password"),
    ],
    auth_message="Tax ChatBot — Please log in to continue.",
)
```
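The upload_scenario handler wired to file_upload.change isn’t shown above. Here is a minimal sketch of what it might look like. It assumes Gradio passes the upload as a file path string (older versions pass a tempfile-like object with a .name attribute, which the sketch also accepts). It also assumes the chat should start fresh when a new scenario is loaded. Both are assumptions, and the status messages are made up. The three return values must match outputs=[doc_state, doc_status, chatbot], in that order.

```python
from pathlib import Path

NO_SCENARIO = "No scenario loaded; answering from taxpayer facts and reference material only."


def upload_scenario(file):
    """Hypothetical handler: returns (doc_state text, doc_status message, chatbot history)."""
    if not file:  # the upload box was cleared
        return "", NO_SCENARIO, []
    # Recent Gradio passes a path string; older versions pass an object with .name
    path = Path(file) if isinstance(file, (str, Path)) else Path(file.name)
    text = path.read_text(encoding="utf-8")
    status = f"Loaded {path.name} ({len(text):,} characters)"
    return text, status, []  # empty history: start a fresh conversation for the new scenario
```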

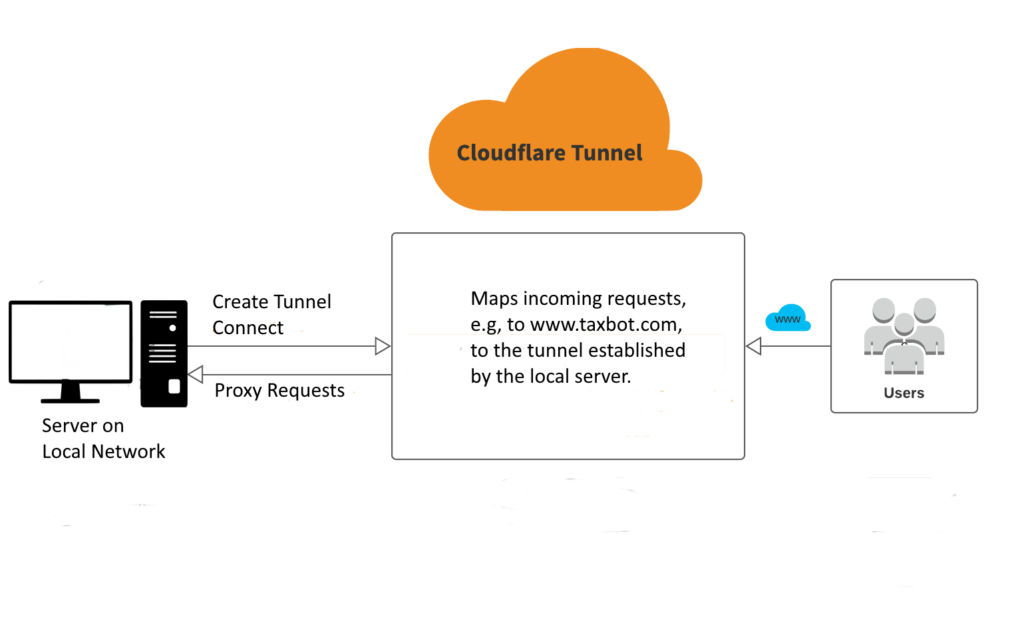

Although the chatbot runs locally on my home PC, I wanted it to be accessible from other devices over the internet. To do that, I used Cloudflare’s free Cloudflare Tunnel service. The only cost was registering a domain name so that the bot could have a fixed, permanent URL; you can also do totally free testing with temporary URLs provided by Cloudflare. The service sets up a secure, outbound-only connection between your local server (or container) and the Cloudflare network using a lightweight daemon named cloudflared. It eliminates the need for open firewall ports, port forwarding, or public IP addresses. This is shown in the figure. Discussion of setting up and using cloudflared is beyond the scope of this write-up.

With this setup, any time I start the taxbot, it is accessible to anyone on the internet with the right user ID and password. The user ID and password check is handled by the Gradio code. This isn’t high-level security, but combined with cloudflared, it’s adequate for my purposes.

Use of AI in Development

I used Claude (not Claude Code) extensively in developing the chatbot itself and in adding Gradio to provide the tool as a web service. I also used it to learn how to use cloudflared to make the tool safely available over the internet. For the chatbot, I went over every suggestion and made sure that I understood the code thoroughly, as one of the main points of the project was to learn more about implementing RAG systems in practice. For the Gradio and cloudflared portions of the work, I was less concerned with understanding every detail.

Using Claude drastically lowered the learning curve and allowed me to implement the system much more quickly and efficiently. But it was not without its problems. For example, I wanted the query to remain displayed while the tool was processing, but Gradio temporarily removes it. Claude suggested a simple fix that passed the query along to the display processing. However, this didn’t change anything, so I asked why in a follow-up. Claude explained that its change did deliver the information to the correct routine in Gradio, but Gradio always clears the query box anyway, and changing that behavior would be very complicated. In several other cases, I had to push back on a change Claude recommended, or ask about something that didn’t seem to make sense. Only then did Claude agree that I was right and revise its recommendation.

Results & Conclusions

Not surprisingly, this home implementation was not able to pass the IRS tax preparer basic exam. It scored 63%, when a passing score requires 80%. One of the primary reasons is the small, limited LLM that I had to use because the tool runs on my home computer. Another is that, this being a hobby project, I did only limited tuning of the number of chunks returned and of the instructional prompt. I’m confident that a professional system could pass the exam. It would need a larger volume of authoritative training documents, a larger and more capable LLM, and more time spent on fine-tuning. I would love an opportunity to put that to the test. However, the commercial tax expert bots I found either lacked a sufficient free trial or explicitly prohibited benchmarking in their license agreements.

In addition, because of the limits of my graphics card, the taxbot typically took a couple of minutes to answer each question.

Despite these limitations, I was impressed by how well it handled some questions, and how it could both describe its reasoning and present the source documents that it used to reach its answers. Here are some examples:

Example 1: Uber Driver

Query: Part of my income comes as a self-employed Uber driver. Can I take mileage as a business deduction, and if so, how much per mile?

Response: Based on the provided reference material, I will address the question step by step.

Relevant Chunks:

- Chunk 2: Car and truck expenses

- Chunk 5: Car and truck expenses (identical to Chunk 2)

- Chunk 4: Income > Schedule C; or Keyword: SC or BUS

- Chunk 6: D-30 to Front Cover, Schedule C – Questions About Your Business

Analysis:

As a self-employed Uber driver, the taxpayer is eligible for business deductions related to their driving activities.

- According to Chunk 2 and Chunk 5, vehicle expenses can be calculated using actual expenses or the standard mileage rate. The standard mileage deduction includes depreciation, lease payments, maintenance, repairs, gasoline (including gasoline taxes), oil, insurance, and vehicle registration fees.

- Since the taxpayer is a self-employed individual reporting income on Schedule C, they are eligible to use the standard mileage rate for business miles driven as an Uber driver. This is supported by Chunk 4, which states that businesses with inventory, employees, contract labor, depreciation, individual asset purchases exceeding $2,500, business use of the home, and expenses over $50,000 or a net loss are Out of Scope.

- The standard mileage rate for 2025 is 70 cents per mile (Chunk 2 and Chunk 5).

- Commuting miles, parking tickets, traffic fines, or penalties are not deductible (Chunk 2 and Chunk 5).

Conclusion:

As a self-employed Uber driver, the taxpayer can take mileage as a business deduction using the standard mileage rate of 70 cents per mile for business miles driven.

Cited References:

- Chunk 2: Car and truck expenses

- Chunk 5: Car and truck expenses

- Chunk 4: Income > Schedule C; or Keyword: SC or BUS

───────────────────────────────

Sources:

[1] NTTC 4491 TY25 Rel 2.pdf (relevance: 0.017)

## Exercises (continued) Question 4: David, a full-time college student who lives at home, also drives part-time three days a week after school as an independent contractor for Uber. He uses…

[2] NTTC 4012 TY25 Rel 1b.pdf (relevance: 0.016)

## Income>Schedule C; or Keyword: SC or BUS Businesses with inventory, employees, contract labor, depreciation, individual asset purchases exceeding $2,500, business use of the home, expenses over $…

[This response took 110 seconds. The “out of scope” text indicates that those items are beyond the scope of tax returns that the volunteer tax preparers are authorized to handle.]

Example 2: Claiming a Dependent

Query: My daughter is 35 years old and a full time student. She doesn’t live with me, but I pay over half of her living expenses. Can I claim her as a dependent?

Response: Based on the provided reference material, I will address the question:

“My daughter is 35 years old and a full-time student. She doesn’t live with me, but I pay over half of her living expenses. Can I claim her as a dependent?”

Relevant chunks:

- Chunk 1: Rules for a qualifying child of divorced or separated parents

- Chunk 3: Who is a qualifying person?

- Chunk 4: Exercises (continued)

Analysis:

According to Chunk 3, “A qualifying child who was under the age of 13 when the expenses were incurred and who can be claimed as a dependent.” However, this rule does not apply because the daughter is over 13 years old.

The next relevant rule is from Chunk 1: “In the case of divorced or separated parents, the custodial parent (with whom the child lived for more than half the year) can qualify for the EIC regardless of whether or not they claim the child as a dependent.” However, this rule does not apply because the daughter lives with her mother only temporarily and not for more than half the year.

The final relevant rule is from Chunk 3: “Any person who was incapable of self-care whom the taxpayer can claim as a dependent or could’ve been claimed as a dependent except that the person had gross income of more than $5,200 or filed a joint return or that the taxpayer or spouse, if married filing jointly, could be claimed as a dependent on someone else’s 2025 return.” However, this rule does not apply because the daughter is not incapable of self-care.

The last relevant rule is from Chunk 4: Exercises (continued), Question 3. According to this question, “Maureen’s 20-year-old daughter, Angie, lived with her for eight months of the year. Angie is not married and is a full-time college student. Is Angie a qualifying child for the EIC?” The answer is no because Angie did not live with Maureen for more than half the year.

However, in our case, the mother pays over half of her daughter’s living expenses, but the daughter does not live with her for more than half the year. According to Chunk 3: “The qualifying person must live with the taxpayer more than 1/2 the year.” Therefore, the rule that applies is from Chunk 4: Exercises (continued), Question 3.

Answer:

No, you cannot claim your daughter as a dependent because she does not meet the requirement of living with you for more than half the year.

───────────────────────────────

Sources:

[1] NTTC 4491 TY25 Rel 2.pdf (relevance: 0.017)

## Rules for a qualifying child of divorced or separated parents The child cannot be claimed as the qualifying child for EIC purposes by more than one taxpayer. In the case of divorced or separa…

[2] NTTC 4012 TY25 Rel 1b.pdf (relevance: 0.016)

## Who is a qualifying person? – A qualifying child who was under the age of 13 when the expenses were incurred and who can be claimed as a dependent. However, see the caution below. Only the …

[This response took 90 seconds. NOTE THAT THIS ANSWER IS WRONG!!! The daughter is too old to be a qualifying child, but she can still be a qualifying relative. A son or daughter doesn’t have to live with you at all, provided that you pay over half of their support and their gross income is below a set maximum. Apparently, the taxbot focused on the reference material that specifically referred to children. It did not pull chunks dealing with “qualifying relatives,” even though a daughter can be a qualifying relative. If a query specifically states that the person is a qualifying relative, the taxbot gets the correct answer, but in that case, the questioner will probably already know the answer.]

Example 3: Home Office Deduction

Query: I’m self-employed and use part of my home as a home office. Can I claim a deduction for that, and how does that work?

Response: Based on the provided reference material, I will address the question about claiming a home office deduction as a self-employed individual who uses part of their home for business purposes.

Relevant chunks:

- Chunk 4: Other terms to know (specifically, the definition of “Home office”)

- Chunk 4: Self-employment tax: (mentioning that the software will automatically calculate the required tax and the adjustment to income for the portion of the tax that is deductible)

- Chunk 4: Net earnings from self-employment: (mentioning that net earnings represent “compensation” for IRA contribution and deduction purposes)

According to Chunk 4, a home office deduction may be claimed if the part of the home used for business meets one or more of the following conditions:

- Exclusively and regularly as the taxpayer’s principal place of business;

- Exclusively and regularly as a place where the taxpayer meets or deals with patients, clients, or customers in the normal course of a trade or business;

- In the case of a separate structure that is not attached to the home, in connection with the trade or business;

- On a regular basis for certain storage use;

- For rental; or

- As a daycare facility.

Since the taxpayer uses part of their home as a home office, they may be eligible for this deduction. However, it’s essential to note that the home office deduction “may be limited and is always out of scope” (Chunk 4).

Regarding the calculation of the home office deduction, the reference material does not provide specific guidance on how to calculate it. However, it mentions that the software will automatically calculate the required tax and the adjustment to income for the portion of the self-employment tax that is deductible (Chunk 4: Self-employment tax:).

To determine if the taxpayer can claim a home office deduction, I would need more information about their specific situation, such as how they use their home office and whether it meets one or more of the conditions listed above.

However, based on the provided reference material, I can conclude that:

- The taxpayer may be eligible for a home office deduction if they meet one or more of the conditions listed in Chunk 4.

- The software will automatically calculate the required tax and the adjustment to income for the portion of the self-employment tax that is deductible (Chunk 4: Self-employment tax:).

- Net earnings from self-employment represent “compensation” for IRA contribution and deduction purposes, which may be relevant if the taxpayer wants to claim an IRA deduction (Chunk 4: Net earnings from self-employment:).

───────────────────────────────

Sources:

[1] NTTC 4491 TY25 Rel 2.pdf (relevance: 0.017)

## Self-employment tax Self-employed taxpayers can deduct a portion of their self-employment tax from their income. Self-employment Tax is covered in the Lesson 27 – Other Taxes. The self-empl…

[This response took 102 seconds. Again, the reference to “out of scope” is because, as noted in the reference material, the volunteer tax preparers aren’t authorized to prepare returns that include home office expenses. The IRS sets rules every year for what is “in scope” and “out of scope” for these volunteers.]

Code

The full code for the taxbot is available for reference on GitHub at github.com/ViennaMike/Taxbot/. You will need to replace the placeholders for UserID and Password in the code; the code will also accept a list of (UserID, Password) tuples. You will also need to download the two volunteer tax guides and go through the preprocessing steps described above to chunk the documents and put them in a vector store database. The two documents are also available on GitHub as PDFs.
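Instead of editing the placeholder credentials in the source, you could read the (user ID, password) list passed to demo.launch(auth=...) from an environment variable. Here is a minimal sketch. TAXBOT_USERS and its user:password,user:password format are invented for this example.

```python
import os


def load_auth_pairs(env_var: str = "TAXBOT_USERS") -> list[tuple[str, str]]:
    """Parse "user1:pass1,user2:pass2" into [(user1, pass1), (user2, pass2)].

    Only the first ':' in each entry separates user from password, so passwords
    may contain ':' but not ','. Raises if nothing usable is configured, so the
    app never launches without credentials.
    """
    pairs = []
    for position, entry in enumerate(os.environ.get(env_var, "").split(","), start=1):
        entry = entry.strip()
        if not entry:
            continue
        user, sep, password = entry.partition(":")
        if not sep or not user or not password:
            # Don't echo the entry itself: it may contain a password
            raise ValueError(f"Malformed entry #{position} in {env_var}")
        pairs.append((user, password))
    if not pairs:
        raise RuntimeError(f"No credentials found in the {env_var} environment variable")
    return pairs
```

The launch call would then pass auth=load_auth_pairs() in place of the hard-coded list, keeping real passwords out of the code you publish.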